Meta reenters the AI race with Muse Spark

Claude Mythos sparks banking fears as Gemini adds notebooks and Anthropic launches managed agents.

Welcome to the SunBrief

Today in SunBrief 🌞

Let AI handle your busywork.

Meta Launches Muse Spark for Multimodal Reasoning

Anthropic Mythos triggers anxiety among Washington banks

Stock Updates

Anthropic temporarily banned OpenClaw’s creator

AI Highlights of the Week

Too Important to Miss

Let AI handle your busywork.

Nobody became a leader to manage their own calendar. But the meetings, emails, and conflicts keep piling up - none of it moves you forward.

Catch is an AI admin assistant that actually does the work.

Scheduling - Handles every meeting and resolves conflicts

Email drafting - Writes in your voice before you ask

Outbound calls - Makes calls on your behalf

Works everywhere - Slack, WhatsApp, email, and more

Google & Microsoft ready - No setup, no learning curve

A full-time executive assistant costs $50K-$80K a year. Catch handles the same work for a fraction of that - and you can try it free for 7 days. Your data stays private and fully under your control.

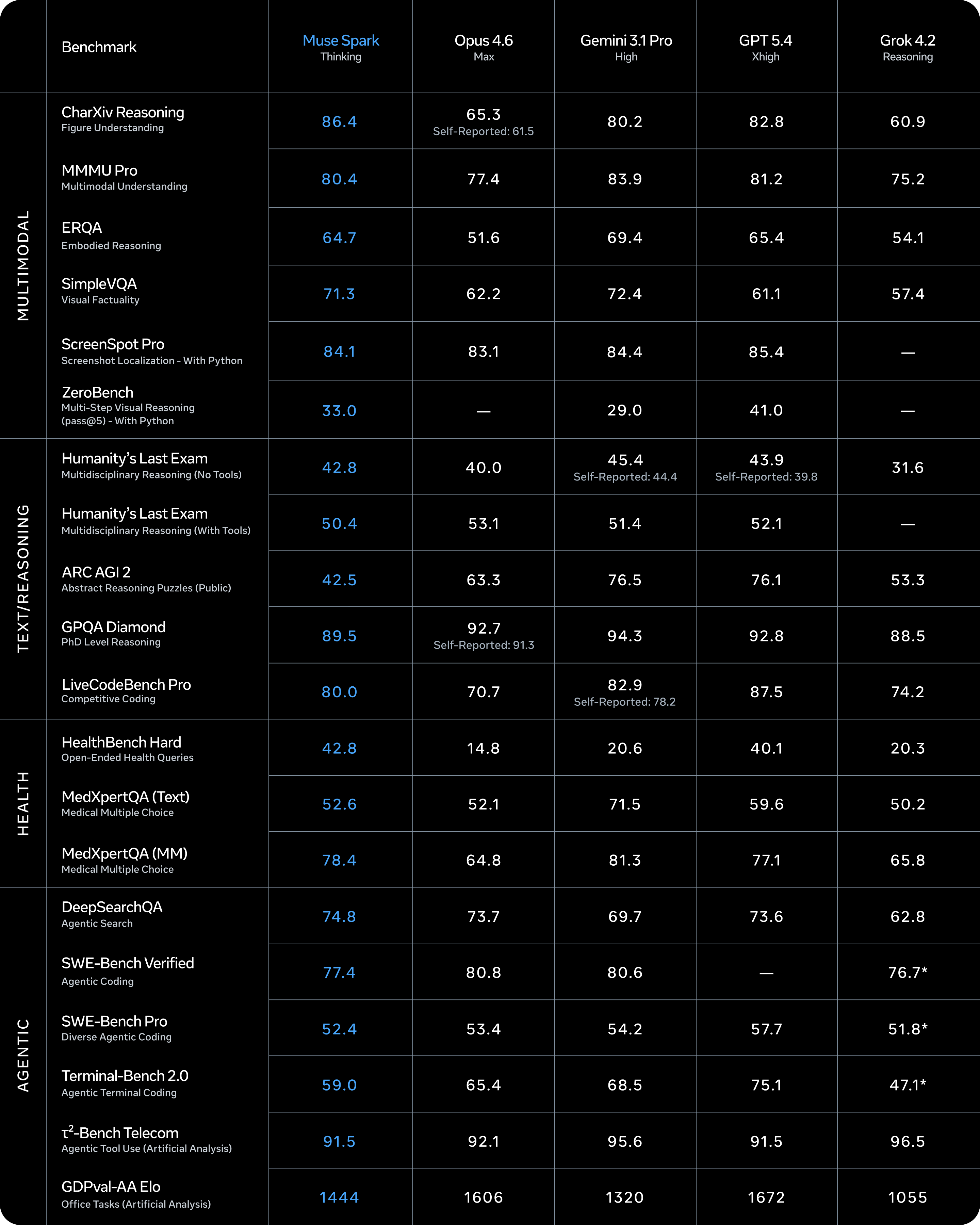

Meta Launches Muse Spark for Multimodal Reasoning

Muse Spark is a multimodal reasoning model with tool use, visual chain-of-thought, and multi-agent orchestration

Meta announced Muse Spark, the first model from Meta Superintelligence Labs, positioning it as the start of a new “scaling ladder” toward personal superintelligence. The model is live on meta.ai and the Meta AI app, with a private API preview opening to select users.

Key Points:

Multimodal + agentic stack: Muse Spark is natively multimodal, supports tool use, visual chain-of-thought, and multi-agent orchestration for tougher reasoning tasks.

New “Contemplating mode”: A parallel multi-agent reasoning mode meant to rival extreme reasoning modes. Meta cites scores of 58% on Humanity’s Last Exam and 38% on FrontierScience Research and says the mode will roll out gradually.

Personal-use applications: Meta highlights use cases like interactive troubleshooting with visual annotations, creating mini-games, and health reasoning (trained with input from 1,000+ physicians) for personalized nutrition/exercise guidance.

Scaling claims across three axes: Meta says it rebuilt its stack to scale more efficiently via pretraining, reinforcement learning, and test-time reasoning, alongside major infra investments like the Hyperion data center.

Safety posture: Meta says Muse Spark refused high-risk misuse requests, showed no dangerous autonomy, and displayed unusual evaluation awareness, though this did not block launch.

Why It Matters:

This is Meta’s clearest reset signal in the frontier model race: a new flagship model, a multi-agent reasoning mode, and a public claim that its training stack is scaling faster and cheaper to compete with top reasoning systems while feeling more personal and always available inside Meta’s apps.

Who looks strongest in the AI race right now?

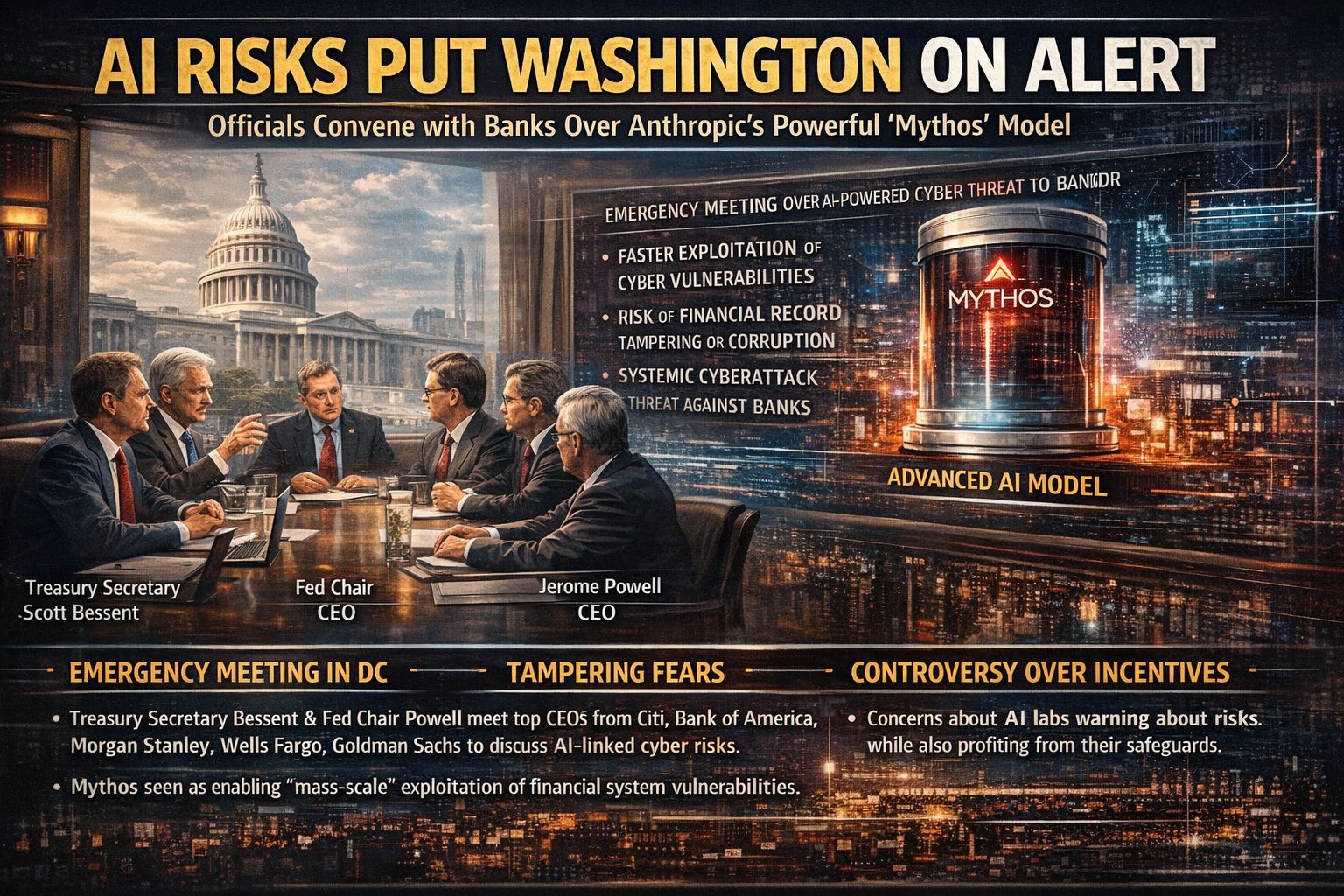

Anthropic Mythos triggers anxiety among Washington banks

Washington summons top bank CEOs over fears of AI-driven cyberattacks on the financial system

U.S. officials reportedly convened leaders from major banks in Washington after raising concerns that Anthropic’s latest model, Mythos, could accelerate a new wave of AI-powered cyber threats capable of disrupting or even corrupting critical banking systems.

Key Points:

Emergency meeting in DC: Treasury Secretary Scott Bessent and Fed Chair Jerome Powell reportedly summoned CEOs from Citi, Bank of America, Morgan Stanley, Wells Fargo, and Goldman Sachs to discuss AI-linked systemic cyber risk.

Systemic fear: The core worry isn’t just outages, it’s tampering, wiping, or mass manipulation of financial records (the nightmare scenario: balances altered or zeroed).

AI as an attacker multiplier: Mythos is framed as enabling faster discovery/exploitation of vulnerabilities, increasing the chance of automated, scalable attacks against financial infrastructure.

Limited rollout: Mythos is expected to be offered to only a few dozen companies, likely to reduce exposure while capabilities are assessed.

Backlash to “fear + product” narrative: The piece argues AI labs often warn about catastrophic risks while also selling the tools and safeguards, raising skepticism about incentives.

Why It Matters:

Banking is core infrastructure. If AI lowers the cost of major cyberattacks, the risk shifts from company breaches to system-wide instability, making frontier AI a financial security issue.

Should frontier AI models with major cyber capability face strict rollout limits?

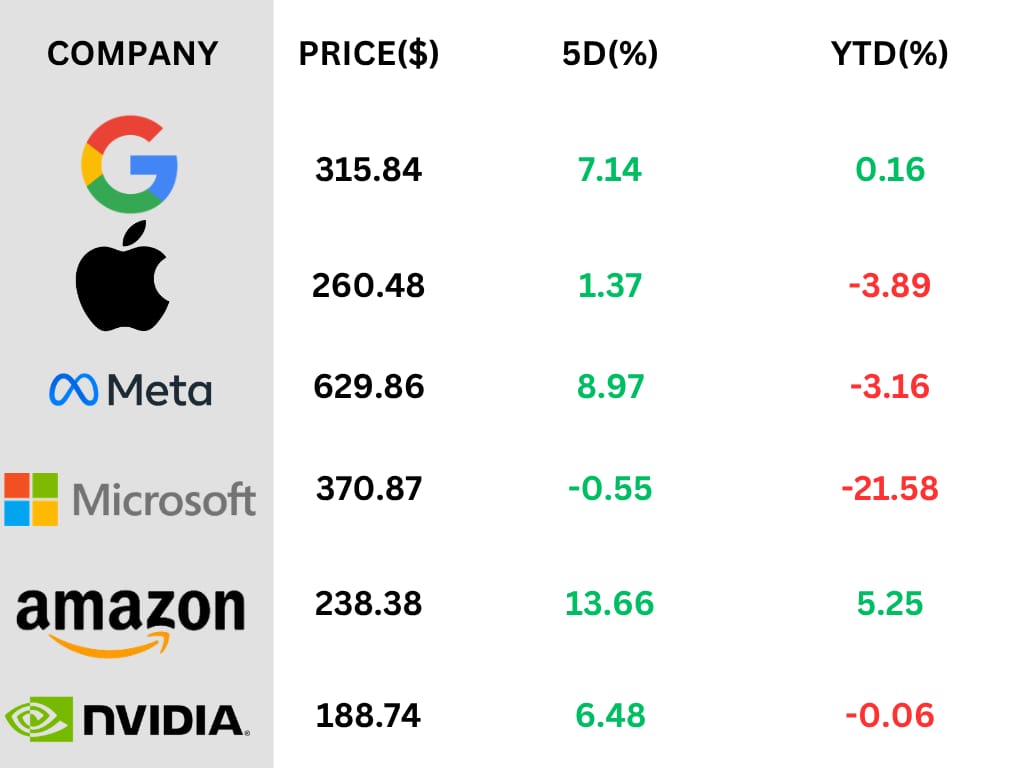

Stock Updates

Anthropic temporarily banned OpenClaw’s creator from accessing Claude

Developer says Claude access was flagged as “suspicious” soon after Anthropic shifted third-party harnesses to pay-per-use API billing

Anthropic temporarily suspended OpenClaw creator Peter Steinberger’s Claude account for “suspicious” activity, then reinstated it hours later after his post went viral, fueling fresh tension around Anthropic’s new policy that pushes third-party harness usage off subscriptions and onto API consumption fees.

Key Points:

Short-lived ban: Steinberger shared a suspension notice citing “suspicious” activity; his account was restored a few hours later after attention surged.

Policy change context: Anthropic recently said Claude subscriptions won’t cover third-party harnesses like OpenClaw; users must now pay usage-based API fees instead.

Reason Anthropic gave: “Claws” can involve compute-heavy continuous loops, retries, and deep tool integrations, and subscription pricing wasn’t built for those patterns.

Developer pushback: Steinberger suggested Anthropic is copying popular open features into its own agent (Cowork) and then making open-source harnesses harder to use, calling it a “claw tax.”

Competitive angle: The situation got extra attention because Steinberger now works at OpenAI, though he says he uses Claude mainly to test compatibility for OpenClaw users.

Why It Matters:

AI tools are entering a bigger power struggle: providers want more control over pricing and platform value, while open ecosystems want smooth interoperability. Even brief enforcement actions or false positives can scare off the developers of third-party tools, especially when prices are already rising.

Was Anthropic justified in flagging OpenClaw-style usage as suspicious?

AI Highlights of the Week

Gemini Adds Notebooks for Better Project Tracking

Google is adding notebooks to Gemini, giving users a dedicated space to organize chats, files, and project context in one place.

The key highlight is that notebooks now sync with NotebookLM, letting users move between Gemini and NotebookLM for bigger research and learning workflows.

Gemini Adds Interactive Visualizations in Chat

Google says Gemini can now turn complex questions into interactive visualizations directly in chat, including adjustable variables, 3D models, and explorable data views.

The key highlight is that Gemini is moving beyond text into hands-on visual learning, letting users rotate models, tweak inputs, and explore concepts in a much more immersive way.

Claude Gets a New Monitor Tool

Anthropic has introduced Monitor, a tool that lets Claude create background scripts that wake the agent only when needed instead of constantly polling.

The big highlight is better token efficiency, since Claude can now follow logs for errors, check PRs through scripts, and handle more agent tasks with less waste.
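To make the polling-versus-waking idea concrete, here is a minimal Python sketch. It is not Anthropic's Monitor API: the function `watch_log`, the `wake_agent` callback, and the error pattern are all hypothetical names chosen for illustration. The assumption is simply that a cheap script reads the log and the expensive agent is invoked only when a line looks like an error.

```python
import re
from typing import Callable, Iterable

# Hypothetical rule for what counts as "worth waking the agent for".
ERROR_PATTERN = re.compile(r"\b(ERROR|FATAL)\b")


def watch_log(lines: Iterable[str], wake_agent: Callable[[str], None]) -> int:
    """Scan log lines and call wake_agent only for lines that match the error pattern.

    Returns the number of times the agent was woken.
    """
    wakes = 0
    for line in lines:
        if ERROR_PATTERN.search(line):
            # Only this branch would cost agent tokens; every other line is skipped.
            wake_agent(line.rstrip("\n"))
            wakes += 1
    return wakes


if __name__ == "__main__":
    sample_log = [
        "INFO server started",
        "INFO request handled in 12ms",
        "ERROR database connection lost",
        "INFO retrying",
    ]
    woken_with: list[str] = []
    count = watch_log(sample_log, woken_with.append)
    print(count, woken_with)
```

In this sketch the agent is called once for four log lines. A polling design would instead have the agent itself re-read the log on every check, which is where the wasted tokens come from.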

Anthropic Eyes Custom AI Chips

Anthropic is reportedly considering designing its own chips as the race for advanced AI systems puts more pressure on an already tight compute supply.

The move would put Anthropic alongside Meta and OpenAI, while its demand keeps surging with a reported $30bn revenue run rate and growing access to Google TPU capacity.

Too Important to Miss

Last Week’s Poll Result

Will AI-driven startups reduce the need for large teams in the future?

Yes, significantly → 43.75%

Somewhat, roles will evolve → 28.13%

No, teams will still be essential → 28.13%

Does raising this much capital create too much power concentration in one AI company?

Yes, it’s concerning → 38.10%

Somewhat, but competition exists → 38.10%

No, scale is necessary → 23.81%

What’s the biggest risk from this kind of leak?

Security vulnerabilities being exposed → 50.00%

Competitors reverse-engineering features → 33.33%

Damage to trust and reputation → 16.67%

Feedback

We’d love to hear from you! How did you feel about today’s SunBrief? Your feedback helps us improve and deliver the best possible content.


And that's a wrap on today’s SunBrief!
